Earlier this year I had a lot of fun using run-time instrumentation and analysis to tackle a variety of program analysis problems. In doing so I became curious about static solutions to the common problems encountered during dynamic analysis. One of the most obvious issues is that you can only examine those paths that you can execute and thus you need to find some way to drive a program through different paths before you can do any kind of analysis. The flip side of that is that you are guaranteed that any path you analyse is valid and so false positives in that regard are avoided. With those experiences in mind I decided to try my hand at purely static analysis recently (recently as in August, I suck at finding time to document stuff apparently). Static analysis has a large variety of sources of error and annoyances of its own but for certain bug classes it seemed like a purely static approach could find some interesting results with a tolerable level of false positives.

Before I started I considered a few different bug classes in terms of the strengths/weaknesses of static analysis. Certain types of vulnerabilities that depend on the values in registers/memory locations are probably best avoided unless you have the time to either implement a hell of theoretical ideas that can limit the innaccuracy or have the time to sift through thousands of false positives. The bug classes I decided to focus on were those that could generally be reduced to deviations from expected orderings of ‘events’ on a path. Such bug classes include use of memory before initialisation, unchecked use of return values from functions, use after X vulnerabilities (where X is an API call that marks an argument as supposedly unusable e.g. free() or fclose()) and incorrectly ordered sequences of system calls. Eventually I settled on looking for use-after-free vulnerabilities, although the code built to do that works for almost all the mentioned bug classes with a little extending.

(Before continuing I should mention exactly what assumptions and bounds I put on the work I wanted to do. Firstly, I decided to do path insensitive analysis – that is I considered all paths through a control flow graph regardless of whether the path condition is satisfiable or not. To do otherwise would have been extra work and for the vulnerability classes I was considering it didn’t turn out to be a big problem.

Secondly, I decided to do data flow analysis over both registers and memory locations. Some static dataflow analysis (I think binaudit) only considers registers, but in my opinion that misses out on a lot of useful information available at practically zero cost. For instance, if we are doing taint analysis and eax is tainted then after mov [ecx+44], eax; I consider the abstract location denoted by the literal [ecx+44] to also be tainted. It remains in the tainted set until the ecx register is untainted but up until that point it is usable as a source of information.

Thirdly, this project was basically just to give me a taste of the practical side of static analysis so spending a month implementing dataflow analysis for the entire x86 instruction set was out of the question. As a result I implemented the data flow analysis for a small subset of the instruction set I considered to be most relevant to the bug classes considered and wrote a conservative default for all other instructions. That is, for any instructions not specifically considered I make an attempt to guess only their destination operand. The effective result of this is that for such instructions the destination will be removed from whatever analysis I’m doing. This potentially introduces false negatives but also frees up about a month of my life. 😉

Fourthly, when considering loops I only expanded them for one iteration. I haven’t thought too much about the implications of this as there didn’t seem to be a better method without investing time into implementing a bounded loop unwinding technique. It would seem like it might introduce false positives but in my testing I did not encounter anything that could be directly traced back to this weakness. It’s hard to tell if that was due to my small test set, the characteristics of the vulnerabilities I was considering or that my conservative data flow analysis hid the potential false positives.

Fifthly (at this point I’m wishing I’d used 1, 2, 3…), I inititally aimed at intraprocedural analysis and then tacked on some interprocedural fun at the end. I’m quite happy with how my interprocedural hackery worked out but there are probably better (and more time consuming) ways to do it.)

Finding equivalent variables

When doing static analysis you can essentially start at any address that suits the problem at hand. This allows us to divide our algorithm for finding use-after-free bugs into two parts (well three actually, but more about that later). The first part of the algorithm is to decide at a free call what memory locations and registers we want to consider free’d. We want to know all variables that contain pointers to the memory location about to be free’d so that we can consider a use of any of these variables to be a bug.

The most common theoretical framework for this problem is called use-def analysis and is common in compiler theory. In such an analysis we take a variable use (e.g. the push of whatever variable is to be free’d) and compute all its definitions at that point by traversing backwards over all paths to that point. We then want to apply this function recursively over all the definition variables we have found, treating them as uses. By modifying the standard use-def algorithm we can do this to find all variables equivalent to the free’d variable.

In my implementation as I traverse the paths backwards from a free call to find definitions I maintain a list of all locations that are used as destination operands in instructions i.e. they are rewritten before the free call. I will refere to this as the clearedVars list. So, if we are looking for the definition of a variable x and it is found at an instruction mov x, y; we know y is equivalent to the free’d variable if and only if y is not a member of clearedVars. Regardless of whether y is in clearedVars or not we then apply our use-def algorithm to find its definitions with the same considerations. This iterative approach is continued until all paths leading to the free call have been analysed and we are left with a set of variables equivalent to the argument to free.

The following snippet of Python code will hopefully help the explanation. It shows the logic applied to the destination operand of a mov instruction.

if dst in state['needDefList']:

src = insDataFlow.getSrcFor(dst)

state['needDefList'].append(src)

if src not in state['clearedVars']:

if src not in self.equivVarList:

self.equivVarList.append(src)

state['needDefList'].remove(dst)

state['clearedVars'].append(dst)

When finding the set of equivalent variables we can generally avoid many sources of false positives in static analysis by accepting false negatives. The main source of false positives I encountered (that weren’t caused by my lack of coding talent) derived from considering paths with unsatisfiable conditions.

So at this point we have an algorithm for computing a set of equivalent variables given a starting point and a source variable. In case it isn’t obvious, I should point out that the equivalent variables along each path should be stored separately. Otherwise it becomes an avoidable source of false positives in the next part of our algorithm.

Finding use-after-free instances

Most of the work for this algorithm is in the previous stage. Once we have computed the equivalent variables per backwards path we are ready to look for uses of these variables in an unsafe context. We also use the data flow information from each instruction to add/remove variables from the set of locations we consider to hold pointers to free’d memory.

For use-after-free vulnerabilities I considered unsafe use to be any instruction that used the free’d pointer in the computation of a memory address. (I also extended eventually this definition to include any instruction that pushed a free’d pointer to the stack). Checking for such a condition is relatively simple. For each instruction we just iterate over all base registers used in computing the pointer and determine if it is in the set of variables we know to hold free’d pointers or not.

def checkInsForFreeVarUse(self, ea, dst, src, state):

for op in (dst, src):

if isinstance(op, TaintLoc_MEMREF_REGS):

# Usage e.g. mov [ebp+10], eax; and ebp is a free'd var

for usedReg in op.regs:

if usedReg in state['freedVars']:

self.b00m(ea, usedReg)

In my code the TaintLoc_MEMREF_REGS class represents an operand that is a memory location constructed using at least one register. The objects src and dst are the source and destination operands for a given instruction (a single source and destination suffices for the instructions instrumented in this case).

That is basically the bones of an algorithm that can operate within the bounds of any function containing a call to free; this is useful in its own right but as I thought about it I began to consider cases where a function may free one of its arguments. This could be a problem in two ways: 1) The programmer using the API call is unaware of this functionality, or forgets, or 2) The function should not free the argument and that it does is a flaw in its implementation. The latter case is more interesting in my opinion but the same approach will detect both.

Function aliases and interprocedural analysis

There is probably some other name for what I’m about to describe but let’s call it a ‘function alias’ for now. True interprocedural analysis of a function f containing a call to free would involve relatively complex analysis of the relationship between non-local variables in f and the variables defined at the call sites of f. Doing this properly is non-trivial but we can perform a more limited analysis with much less effort.

The type of interprocedural analysis I implemented begins with searching for aliases to the API call that we are using as the starting point for our algorithms. For use-after-free bugs this is the free function. Let us consider this trigger function as a tuple (f, n), where f is the function in question and n is the argument we consider to be free’d or closed or whatever the action we are interested in happens to be. An alias for a function (f, n) is then a function (g, m) such that calling the function g with the variable x as argument m results in a call to f with x as argument n. If we are provided with one or more aliases for (f, n) we can then apply the exact same analysis algorithm as described in the previous sections to find interprocedural bugs.

The question then becomes how do we build such a list? The answer is pretty simple at a high level – say we are in a function g and analysing a call to the function f that free’s its nth argument. Our first algorithm will build the set of variables equivalent to the nth argument to f, let’s call it E. If any of g‘s arguments are in this set then we know (g, m) is an alias for (f, n) where m is the index of that variable. The only tricky part of this is tracking the function arguments to g. IDA takes care of this for us and renames memory locations automatically using an identifier similar to arg_X but if using another framework you may have to do that yourself. (This turns out to be trickier than you might think, a description of how the simplex method is used in IDA can be found here.)

Righty, so at this point we can take any function and compute its aliases. For maximum fun we apply this recursively to find aliases for aliases and so on, applying our basic analysis algorithms to each discovered alias in turn.

That basically sums up the details of my approach. I used it to look for use-after-free bugs but with varying amounts of effort it could be changed to look for all sorts of fun stuff.

Testing

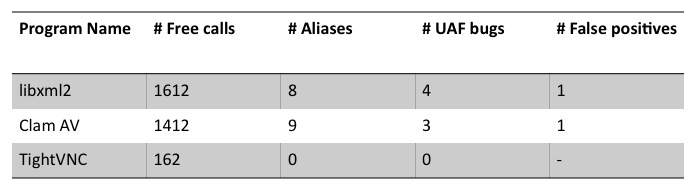

I tested my implementation (~850 lines of Python using IDAPython as a framework) on libxml2, which has a history of use-after-free bugs, Clam Antivirus, which has a history of sucking and TightVNC, because the name makes me giggle.

The following table summarises the results of my rather brief and unscientific testing.

Both libxml2 and Clam AV came up good for a few bugs each and multiple aliases for free. Some of these aliases were obviously on purpose with names like cl_engine_free, whereas others were more difficult to determine. All three results found in Clam AV were as a result of analysis on an alias function so it is possible that this function has unintended side effects. For libxml2 the bugs were split, two resulting from direct calls to free and two resulting from a call to an alias function. Despite its giggle inducing name, TightVNC showed no bugs and, disappointingly, not even an alias to free. For both libxml2 and Clam AV the false positives were as a result of paths with unsatisfiable conditions.

Conclusion

In the above table I’ve purposely used the term ‘bugs’ instead of ‘vulnerabilities’. The reason for this is the same reason that I’ve now moved on to investigating hybrid dynamic/static implementations. Finding a bug using static analysis of the type I have described gets you a location in a binary where some broken coding idiom occurs. This is useful in its own right and all valid bugs detected during my testing are concrete paths leading to use of a dangling pointer.

From an offensive point of view we must consider the issue further though. Without more analysis you have no idea how to trigger this code or even if it is triggerable in a malicious fashion. In fact, as soon as you get over the initial rush of ‘oh look, a shiney new bug’ you quickly realise you have a mountain of work to actual exercise that code, let alone craft an exploit. From a defensive point of view none of this really matters, the code is incorrect regardless and should be fixed but from an exploit writers point of view discovering the bug in this fashion merely puts your shoes on. You still have a marthon to run in order to get to an exploit.

Hi Sean,

Excuse me, because of my english I am spanish.

How is your implementation of Data Flow Analysis ?

Are you using framework DFA with Lattices ?

What is your iterative algorytms?

Are you use a Intermedate Lenguage (IL) like REIL of Zynamics?

Your algorytm is context sensitive and interprocedural?

Could you show me in pseudo code or python?

Are you using SSA form, for taint?.

I’m developing a IR SSA form for platform independence, but my problem is to relate the variables of IL to their respective regions of memory or registers with Data Dependences Graph and create a linear order algorytm O(V), je je je.

Regards.

EDIT: Oops, in my initial reply to this I didn’t actually check what post your comment was on and for some reason thought you were talking about my exploit generation stuff. Lets try again:

How is your implementation of Data Flow Analysis ?

Standard use/def analysis using SSA form.

Are you using framework DFA with Lattices ?

No

What is your iterative algorytms?

It’s explained in the post. It’s not very sophisticated or complex and for a real project like a commercial tool or a thesis you’d have to put in a lot more effort I’m sure.

Are you use a Intermedate Lenguage (IL) like REIL of Zynamics?

Yes, I’m using an IL I developed myself.

Your algorytm is context sensitive and interprocedural?

This is also answered in the post 😛 It is interprocedural in that I consider certain call-site context relating to function aliases but I do not track global variables or modifications through pointers so I miss a lot of data in that sense. It is not path-sensitive though, hence the false positives in my results.

Hello, Sean! I’m from Russia and I explore methods which finding vulnerabilities. I know, that data flow analysis is a great static method for finding vulnerabilities in source code. I’ve read a lot of foreign literature about data-flow analysis, but I still don’t understand – how data-flow analysis can helps in detecting vulnerabiliies? It can helps to see the structure of programm, but its will not show us where is the vulnerability, isn’t it? Maybe You know? 🙂

Hi Sean,

I liked the work described in this post. Generally, the bugs you are describing are found by enforcing type-state checking properties.

A few comments:

“(…) For instance, if we are doing taint analysis and eax is tainted then after mov [ecx+44], eax; I consider the abstract location denoted by the literal [ecx+44] to also be tainted. It remains in the tainted set until the ecx register is untainted but up until that point it is usable as a source of information.”

-> How does this taint analysis algorithm work for loop and arrays? (say: if 44 were replaced by a variable)

“When finding the set of equivalent variables we can generally avoid many sources of false positives in static analysis by accepting false negatives. The main source of false positives I encountered (that weren’t caused by my lack of coding talent) derived from considering paths with unsatisfiable conditions.”

-> Have you considered the use of a constraint solver to eliminate infeasible paths?

“(…) Both libxml2 and Clam AV came up good for a few bugs each and multiple aliases for free. Some of these aliases were obviously on purpose with names like cl_engine_free, whereas others were more difficult to determine. All three results found in Clam AV were as a result of analysis on an alias function so it is possible that this function has unintended side effects.

-> can you be clearer on what you mean by unintended side effects? How do you match the “alias” counter-example to the “real” counter-example?

“For libxml2 the bugs were split, two resulting from direct calls to free and two resulting from a call to an alias function. Despite its giggle inducing name, TightVNC showed no bugs and, disappointingly, not even an alias to free. For both libxml2 and Clam AV the false positives were as a result of paths with unsatisfiable conditions.”

-> Have you analyzed those results in more details, especially for TightVNC? Does your analyzer reports no bug because the codebase is much smaller / safer, or because of an expected(but hopefully liftable) limitation of your tool?

I did not have the chance to look at the bugs you found as no information is given from the post.

I would be interested to see how you can make use of your exploit generation work combined with this static analyzer can bring a result on simple examples.

-jv

Hey,

-> How does this taint analysis algorithm work for loop and arrays? (say: if 44 were replaced by a variable)

Poorly. In fact, this analysis when applied over loops was a rather annoying source of false positives when I dug up this code earlier in the week. I’m actually going back over this problem at the moment with the goal of doing it properly as opposed to a hacked together couple-of-day project in IDAPython so I’ll actually consider these issues as opposed to blissfully ignoring them.

-> Have you considered the use of a constraint solver to eliminate infeasible paths?

Yup. It was basically the first idea to pop into my head when I ran into flow sensitivity issues. For this code I whipped up a pretty basic IL over a few instructions in Python and I need to extend it to deal with a greater portion of x86 before I consider generating correct path conditions (or consider building any more algorithms on it for that matter). I’ve been pretty busy with more immediate concerns (read: work) for the past 6 months

-> can you be clearer on what you mean by unintended side effects? How do you match the “alias” counter-example to the “real” counter-example?

By ‘unintended side effects’ I merely meant that some functions under certain conditions seemed to free a parameter passed to that function and this wasn’t taken into account in the calling context. As for matching the counter-examples, it was pretty simple as I stored all the relevant alias information as it was discovered.

-> Have you analyzed those results in more details, especially for TightVNC? Does your analyzer reports no bug because the codebase is much smaller / safer, or because of an expected(but hopefully liftable) limitation of your tool?

Unfortunately not. I really did just pick TightVNC fairly randomly and I don’t have a huge interest in auditing it for bugs.

Sean, thanks for the answers.

How do you manage to have so few false positives if you do not use a constraint solver to eliminate infeasible paths?

Also, do you think you can quantify the number of false negatives by using your technique? Aliasing constraints at the binary level are even harder to track than at source level (where it is already a problem) and I suspect your tool is only able to find the low hanging fruits (which may good enough for your purpose).

It would be useful to have more data from your analysis on larger and well audited programs as to compare properly with other verification techniques and processes that are in place at various R&D labs across the industry and academy : not many people are sticking to dataflow analysis as an end-user technique because DFA tend to be noisy and poorly configurable. Other techniques such as model checking or theorem proving tend to lift those limitations.

Cheers

-jv

You are correct in that this approach will target only low hanging fruit and from this comes the low level of false positives. The reason for this, of course, is that my analysis is a huge underapproximation of the programs behaviour. I’m sure if I were to make my analysis more conservative and target more complex vulnerable paths then the false positive rate would spike due to my rather simplistic algorithms.

Naturally a better approach to this, and one I’m slowly working on when I have free time, involves theorem proving over formulae built from context-senstive/flow sensitive analysis of a binary. Doing this correctly is a much more involved process though and one I wasn’t ready to invest the time in when I hacked up the scripts mentioned above. At a minimum one needs some sort of IL to support construction of use-def chains, points-to analysis and other similar things. At a more advanced level, and in order to find the kinds of UAF bugs we typically discover manually, there will need to be some concept of threads and concurrent execution as well as models of data structures like hash tables and so on. If you decide to drag C++ into the mix then a whole world of other problems accompany. So yea… that will take a bit of time 🙂

I wouldn’t worry about comparing these results to those of R&D labs as I have implemented an incredibly basic subset of what one one expect from a group of PhD researchers. If I get around to ever finishing a more interesting implementation I’ll let you know 🙂