Day 5

Sketching: Program Synthesis using SAT Solvers (Armando Solar-Lezama)

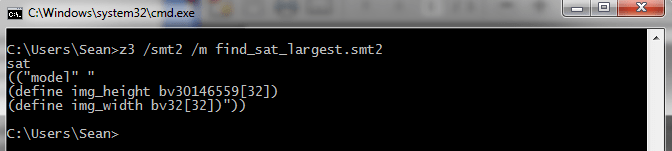

Armando started his talk by demonstrating the automatic synthesis of a program for swapping two integer variables without using a third. It’s a standard algorithm and quite small but was still cool to see. He then demonstrated a few more algorithms involving bit-level arithmetic. The implementation of this tool, called Sketch, can be found here. The demonstrations given were for a C-like language and apparently synthesis works quite well for algorithms based around bit-twiddling.
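For readers who have not seen the trick, here is a minimal sketch of swapping two integers without a temporary. The post does not say which variant Sketch produced, so the XOR-based version below is an assumption; the function name `xor_swap` is purely illustrative.

```python
from typing import Tuple


def xor_swap(x: int, y: int) -> Tuple[int, int]:
    """Swap two integers without a third variable, using three XORs."""
    x ^= y  # x now holds x0 ^ y0
    y ^= x  # y = y0 ^ (x0 ^ y0) = x0
    x ^= y  # x = (x0 ^ y0) ^ x0 = y0
    return x, y


print(xor_swap(3, 5))  # (5, 3)
```

The interesting part of the demo is that a synthesizer only needs the shape "three assignments built from some bitwise operator" and a specification that the values end up swapped; the operator and operand choices are what get filled in.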

These programs were generated from program ‘sketches’, essentially algorithmic skeletons, and a test harness, similar to unit tests, that described the desired semantics of the program. The sketches express the high level structure of the program and then the details are synthesized using a SAT solver and a refinement loop driven by the tests. The idea of the sketches is to make the problem tractable. The intuition for this was given by the example of curve fitting. That can be a difficult problem if you have nothing to go on but data points whereas if you are told the curve is Gaussian, for example, the problem becomes much more feasible.

The synthesis algorithm first uses the sketch to generate a candidate program, and a SAT solver is then invoked to check whether that candidate satisfies the semantics described by the tests. If it does not, a counter-example is generated and used to refine the next iteration of program generation. This is a heavily simplified description and the full details can be found in Armando’s thesis.
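The loop described above can be sketched roughly as follows. This is not how Sketch itself is implemented: both the candidate generation and the verification step use brute-force enumeration over 8-bit values as a stand-in for the SAT solver, and the names `spec`, `sketch`, `synthesize_candidate`, `find_counterexample` and `cegis` are all hypothetical. The toy sketch is `(x << ??) + x` and the specification is "multiply by 5".

```python
from typing import List, Optional

WIDTH_MASK = 0xFF  # assume 8-bit arithmetic to keep enumeration cheap


def spec(x: int) -> int:
    """Desired semantics: multiply by 5 (modulo 2**8)."""
    return (x * 5) & WIDTH_MASK


def sketch(x: int, hole: int) -> int:
    """Program skeleton with one unknown constant, written `??` in Sketch."""
    return ((x << hole) + x) & WIDTH_MASK


def synthesize_candidate(examples: List[int]) -> Optional[int]:
    """Find a hole value that agrees with the spec on all examples so far."""
    for hole in range(8):
        if all(sketch(x, hole) == spec(x) for x in examples):
            return hole
    return None


def find_counterexample(hole: int) -> Optional[int]:
    """Verifier: return an input where the candidate is wrong, or None."""
    for x in range(256):
        if sketch(x, hole) != spec(x):
            return x
    return None


def cegis() -> Optional[int]:
    examples: List[int] = []
    while True:
        hole = synthesize_candidate(examples)
        if hole is None:
            return None  # the sketch cannot express the spec
        counterexample = find_counterexample(hole)
        if counterexample is None:
            return hole  # candidate is correct on every input
        examples.append(counterexample)  # refine and try again


print(cegis())  # 2, i.e. (x << 2) + x
```

Each counter-example rules out at least the candidate that produced it, so with a finite set of hole values the loop always terminates.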

This was yet another talk where there was an emphasis on pre-processing formulae before they get to the solver. The phrase ‘aggressive simplification’ was used over and over throughout the conference and for synthesis this involved dataflow analysis and expression reduction (e.g. for a Boolean y, y AND 1 reduces to y) as well as more standard common sub-expression elimination.
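As a rough illustration of what expression reduction means here, the following sketch applies a handful of local rewrite rules to a tiny expression tree. The expression encoding, the rule set and the 8-bit width are all assumptions made for the example; they are not taken from Sketch. Note that 1 is the identity for AND only on single-bit values, so for 8-bit values the corresponding rule uses the all-ones mask `0xFF`.

```python
from typing import Tuple, Union

# Expressions: an int constant, a str variable name, or (op, lhs, rhs).
Expr = Union[int, str, Tuple[str, "Expr", "Expr"]]

ALL_ONES = 0xFF  # identity element for AND on 8-bit values (assumed width)


def simplify(expr: Expr) -> Expr:
    """Apply a few local rewrite rules bottom-up."""
    if not isinstance(expr, tuple):
        return expr
    op, lhs, rhs = expr
    lhs, rhs = simplify(lhs), simplify(rhs)

    if op == "and":
        if lhs == ALL_ONES:
            return rhs  # 0xFF AND y  ->  y
        if rhs == ALL_ONES:
            return lhs  # y AND 0xFF  ->  y
        if lhs == 0 or rhs == 0:
            return 0    # y AND 0     ->  0
        if lhs == rhs:
            return lhs  # y AND y     ->  y
    elif op == "or":
        if lhs == 0:
            return rhs  # 0 OR y      ->  y
        if rhs == 0:
            return lhs  # y OR 0      ->  y
        if lhs == rhs:
            return lhs  # y OR y      ->  y
    elif op == "xor":
        if lhs == rhs:
            return 0    # y XOR y     ->  0
        if lhs == 0:
            return rhs  # 0 XOR y     ->  y
        if rhs == 0:
            return lhs  # y XOR 0     ->  y
    return (op, lhs, rhs)


print(simplify(("or", ("and", "y", 0xFF), ("xor", "x", "x"))))  # y
```

Every node removed this way is one less sub-formula the solver has to encode and reason about.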

Day 6

Harnessing SMT power using the verification engine Boogie (Rustan Leino)

This talk began with some coding demonstrations in a language called Dafny that has support for function pre-conditions, post-conditions and loop invariants. As these features are added to a code-base they are checked in real time. Dafny is translated into an intermediate verification language (IVL) called Boogie (the verification system for it is open source under the MS public license) which can be converted into SMT form and then checked using the Z3 SMT solver. While this is fun to watch, most languages don’t have these inbuilt constructs for pre/post-conditions and invariants. Fortunately, Boogie is designed to be a generic IVL and translation tools exist for C, C++, C#, x86 and a variety of other languages (although from what I gather only some of these are publicly available and none are open source). As such, Boogie is designed to separate the verification of programs in a given language from the effort of converting them into a form that is amenable to checking.
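To make the three kinds of annotation concrete, here is a small sketch in Python that uses `assert` in the places where Dafny would use `requires`, `invariant` and `ensures`. The important difference is that these assertions are only checked at runtime, for the inputs you happen to run, whereas Dafny proves them statically for all inputs. The function `sum_to` is a made-up example, not something shown in the talk.

```python
def sum_to(n: int) -> int:
    """Return 0 + 1 + ... + n, with its contract checked at runtime."""
    # Pre-condition (Dafny: `requires n >= 0`).
    assert n >= 0, "pre-condition violated: n must be non-negative"

    total, i = 0, 0
    while i < n:
        # Loop invariant (Dafny: `invariant`), checked at the top of
        # every iteration: total is the sum of 0..i.
        assert 0 <= i <= n and total == i * (i + 1) // 2
        i += 1
        total += i

    # Post-condition (Dafny: `ensures`).
    assert total == n * (n + 1) // 2, "post-condition violated"
    return total


print(sum_to(4))  # 10
```

The loop invariant is what lets a verifier connect the pre-condition to the post-condition without unrolling the loop: it holds on entry, every iteration preserves it, and together with the exit condition it implies the post-condition.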

The high level, take-away message from this talk was “Don’t go directly to the SMT solver”. It relates to the separation of concerns I just mentioned. This lets you share infrastructure and code for verification tasks that will be common between many languages and also means you have an intermediate form to perform simplification on before passing any formulae to a solver.

HAVOC: SMT solvers for precise and scalable reasoning of programs (Shuvendu Lahiri & Shaz Qadeer)

HAVOC is one such verification tool for C that makes use of Boogie. It adds support for user-defined contracts on C code that can then be checked. Based on the Houdini algorithm, HAVOC can also perform contract inference with the aim of alleviating much of the burden on the user.
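The general idea behind Houdini is to start with a set of guessed annotations, ask the verifier which ones it can refute, throw those away and repeat until everything that remains is consistent. A minimal sketch of that fixpoint for candidate loop invariants is shown below. It assumes a toy loop over a state `(i, s)` and replaces the real verifier with brute-force checking over a small bounded state space; none of the names or candidates come from HAVOC.

```python
from typing import Callable, Dict, Iterator, Optional, Tuple

State = Tuple[int, int]  # (i, s) for: i = 0; s = 0; while i < 5: s += i; i += 1
Pred = Callable[[State], bool]

INITIAL: State = (0, 0)


def step(state: State) -> Optional[State]:
    """One loop iteration, or None if the loop guard is false."""
    i, s = state
    return (i + 1, s + i) if i < 5 else None


def bounded_states() -> Iterator[State]:
    """Stand-in for the verifier: enumerate a small state space."""
    return ((i, s) for i in range(-2, 8) for s in range(-2, 25))


def is_preserved(pred: Pred, assumed: Dict[str, Pred]) -> bool:
    """Does pred hold after a step from any state satisfying `assumed`?"""
    for state in bounded_states():
        if all(p(state) for p in assumed.values()):
            nxt = step(state)
            if nxt is not None and not pred(nxt):
                return False
    return True


def houdini(candidates: Dict[str, Pred]) -> Dict[str, Pred]:
    kept = dict(candidates)
    changed = True
    while changed:
        changed = False
        for name, pred in list(kept.items()):
            if not pred(INITIAL) or not is_preserved(pred, kept):
                del kept[name]  # refuted: drop it and re-check the rest
                changed = True
    return kept


CANDIDATES: Dict[str, Pred] = {
    "i >= 0": lambda st: st[0] >= 0,
    "i <= 5": lambda st: st[0] <= 5,
    "s >= 0": lambda st: st[1] >= 0,
    "s <= 3": lambda st: st[1] <= 3,
    "s >= i": lambda st: st[1] >= st[0],
}

print(sorted(houdini(CANDIDATES)))  # ['i <= 5', 'i >= 0', 's >= 0']
```

Note that `s >= 0` only survives because `i >= 0` is assumed alongside it, which is why the candidates are checked together rather than one at a time.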

I really wish we had something similar to HAVOC for code auditing (this was actually one of the use cases mentioned during the talk). I’m not sure about others but essentially how I audit source code involves manually coming up with pre-conditions, post-conditions and invariants and then trying to verify these across the entire code-base by hand. This is fine, but with a tool-set of vim, ctags and cscope it’s also incredibly manual and seems like something that could at least be partially automated. It was mentioned that a more up-to-date version of HAVOC might be released soon so maybe this will be a possibility.

Non-DPLL Approaches to Boolean SAT Solving (Bart Selman & Carla Gomes)

This talk was on probabilistic approaches to SAT solving. These techniques still lag far behind DPLL based algorithms on industrial benchmarks but are apparently quite good on random instances with large numbers of variables.
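To give a flavour of this family of techniques, here is a minimal sketch of WalkSAT, a stochastic local search procedure co-developed by Bart Selman. The post does not say which algorithms the talk covered, so treat the choice of WalkSAT, the parameter values and the tiny example formula as assumptions made for illustration.

```python
import random
from typing import Dict, List, Optional

Clause = List[int]  # DIMACS-style literals: 3 means x3, -3 means NOT x3


def satisfied(clause: Clause, assignment: Dict[int, bool]) -> bool:
    return any(assignment[abs(lit)] == (lit > 0) for lit in clause)


def count_unsat(clauses: List[Clause], assignment: Dict[int, bool]) -> int:
    return sum(not satisfied(c, assignment) for c in clauses)


def walksat(clauses: List[Clause], num_vars: int, max_flips: int = 10000,
            noise: float = 0.5, seed: int = 0) -> Optional[Dict[int, bool]]:
    rng = random.Random(seed)
    # Start from a uniformly random assignment.
    assignment = {v: rng.random() < 0.5 for v in range(1, num_vars + 1)}
    for _ in range(max_flips):
        unsat = [c for c in clauses if not satisfied(c, assignment)]
        if not unsat:
            return assignment
        clause = rng.choice(unsat)  # focus on one violated clause
        if rng.random() < noise:
            var = abs(rng.choice(clause))  # random-walk move
        else:
            def cost(v: int) -> int:
                # Number of unsatisfied clauses if v were flipped.
                assignment[v] = not assignment[v]
                broken = count_unsat(clauses, assignment)
                assignment[v] = not assignment[v]
                return broken
            var = min((abs(lit) for lit in clause), key=cost)  # greedy move
        assignment[var] = not assignment[var]
    return None  # gave up; this says nothing about unsatisfiability


# (x1 OR x2) AND (NOT x1 OR x3) AND (NOT x2 OR NOT x3)
FORMULA: List[Clause] = [[1, 2], [-1, 3], [-2, -3]]
print(walksat(FORMULA, num_vars=3))
```

Unlike DPLL, a procedure like this is incomplete: it can find a satisfying assignment but can never prove that none exists.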

Symbolic Execution and Automated Exploit Generation (David Brumley)

While I previously ranted about the paper this talk was based on, this talk was a far better portrayal of the research. Effectively, we’re in the very, very early stages of exploit generation research; while there have been some cool demos of how solvers might come into play we’re still targeting the most basic of vulnerabilities and in toy environments. All research has to start somewhere though, and my own thesis was no more advanced, so it was good to see this presented with an honest reflection on how much work is left.

One interesting feature of the CMU work is preconditioned symbolic execution, which attaches pre-conditions that a path must satisfy in order to be explored. This is a feature missing from KLEE but would be just as useful in symbolic execution for bug finding as well as exploit generation. Something that remains to be researched and discussed is efficient ways to come up with these pre-conditions.
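The pruning effect of a pre-condition can be sketched with a toy path explorer. Everything here is an assumption made for illustration: the program is a hand-written branch tree over a single 8-bit input, and feasibility is decided by brute-force enumeration rather than by an SMT solver as a real symbolic executor would do.

```python
from typing import Callable, List, Optional, Tuple, Union

Pred = Callable[[int], bool]
# A program is either a leaf label or (branch condition, then, else).
Program = Union[str, Tuple[Pred, "Program", "Program"]]

DOMAIN = range(256)  # stand-in for the solver's search space


def feasible(constraints: List[Pred]) -> Optional[int]:
    """Brute-force 'solver': an input satisfying all constraints, or None."""
    for n in DOMAIN:
        if all(c(n) for c in constraints):
            return n
    return None


def explore(program: Program, precondition: Pred,
            path: Optional[List[Pred]] = None) -> List[Tuple[str, Optional[int]]]:
    """Return (leaf label, witness input) for every path that is explored."""
    path = [precondition] if path is None else path
    if isinstance(program, str):
        return [(program, feasible(path))]
    cond, then_branch, else_branch = program
    negated: Pred = lambda n, c=cond: not c(n)
    results: List[Tuple[str, Optional[int]]] = []
    for constraint, subtree in ((cond, then_branch), (negated, else_branch)):
        extended = path + [constraint]
        # Only descend if pre-condition AND path constraints are satisfiable.
        if feasible(extended) is not None:
            results += explore(subtree, precondition, extended)
    return results


PROGRAM: Program = (
    lambda n: n > 100,
    (lambda n: n % 2 == 0, "A", "B"),
    (lambda n: n < 10, "C", "D"),
)

print(explore(PROGRAM, lambda n: True))     # all four leaves are reached
print(explore(PROGRAM, lambda n: n > 200))  # only leaves A and B remain
```

With the trivial pre-condition every path is explored; with `n > 200` the entire `else` subtree is discarded at the first branch, which is where the saving comes from when the pre-condition is chosen well.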

Conclusion

The summer school was a great event and renewed my enthusiasm for formal methods as a feasible and cost-effective basis for bug finding and exploit development. The best talks were those that presented an idea, gave extensive, concrete data to back it up and explained the core concepts and limitations with real-world examples. I hope to see more papers and talks like this in the future.

A generic conclusion for the six days would be difficult, so instead here are the recurring themes that stood out to me across the talks and that may be relevant to someone implementing these systems:

– Focus on one thing and do it well. For example, separate instrumentation from symbolic execution from solving formulae.

– Aggressively simplify before invoking a solver. Simplification strategies varied from the domain specific, e.g. data-flow analysis, to generic logical reductions, but all of them greatly reduced the complexity of the problems that solvers had to deal with and thus increased the range of problems the tools could handle.

– Abstract, refine and repeat. The concept of a counter-example guided abstraction refinement loop seemed to be core to algorithms from hardware model checking, to program synthesis, to bug finding. In each, CEGAR was used to scale algorithms to more complex and more numerous problems by abstracting complexity and then reintroducing it as necessary.

– Nothing beats hard data for justifying conclusions and driving new research. This point was made in the earliest talks on comparing SAT solver algorithms and reiterated through the SAGAN information collection/organisation system of SAGE. Designing up front to gather data lets you know where things are going wrong, keeps a record of improvements and makes for some pretty cool slides when you need to convince other people you’re not insane =)