This post is a follow-on to my previous ones on automatically determining variable ranges and on uses for solvers in code auditing sessions. In the first of those posts I showed how we can use the symbolic execution engine of ID to automatically model code and then add extra constraints to determine how it restricts the state space for certain variables. In the second I looked at one use case for manual modelling of code and proving properties about it as part of C++ auditing.

In this post I’m going to talk about a problem that lies between the previous two cases. That is, manually modelling code, but using Python classes provided by ID in a much more natural way than with the SMT-LIB language, and looking for optimal solutions to a problem rather than a single one or all possible solutions.

Consider the following code, produced by the HexRays decompiler from an x86 binary. It was used frequently throughout the binary in question to limit the ranges allowed for particular variables. The first task is to verify that it does restrict the ranges of width and height as it is designed to. Its purpose is to ensure that v3 * height is less than 0x7300000, where v3 is derived from width.

    int __usercall check_ovf(int width, int height,
                             int res_struct)
    {
        int v3; // ecx@1

        v3 = ((width + 31) >> 3) & 0xFFFFFFFC;
        *(_DWORD *)(res_struct + 12) = width;
        *(_DWORD *)(res_struct + 16) = height;
        *(_DWORD *)(res_struct + 20) = v3;
        if ( width <= 0 || height <= 0 ) // 1
        {
            *(_DWORD *)(res_struct + 24) = 0;
            *(_DWORD *)(res_struct + 28) = 0;
        }
        else
        {
            if ( height * v3 <= 0 || 120586240 / v3 <= height ) // 2
                *(_DWORD *)(res_struct + 24) = 0;
            else
                *(_DWORD *)(res_struct + 24) = malloc_wrapper(res_struct,
                                                              120586240 % v3,
                                                              height * v3); // 3
            *(_DWORD *)(res_struct + 28) = 1;
        }
        return res_struct;
    }

If the above code reaches the line marked as 3 a malloc call will occur with height * v3 as the size argument. Can this overflow? Given the checks at 1 and 2 it’s relatively clear that this cannot occur but for the purposes of later tasks we will model and verify the code.
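Before involving a solver, it can help to try a few concrete inputs against the check. The following is only a sketch in plain Python: the helper names to_s32, c_div and passes_check are hypothetical, and it assumes that all arithmetic wraps at 32 bits, that the `>>` in the decompiled code is an arithmetic shift on a signed value, and that division truncates towards zero as in C.

```python
LIMIT = 120586240  # 0x7300000, the constant used in the decompiled check


def to_s32(x):
    """Interpret the low 32 bits of x as a signed 32-bit integer."""
    x &= 0xFFFFFFFF
    return x - 0x100000000 if x & 0x80000000 else x


def c_div(a, b):
    """Integer division that truncates towards zero, as in C."""
    q = abs(a) // abs(b)
    return q if (a < 0) == (b < 0) else -q


def passes_check(width, height):
    """Return the malloc size if the inputs reach line 3, otherwise None."""
    if width <= 0 or height <= 0:                         # check 1
        return None
    # Assumption: signed addition wraps and >> is an arithmetic shift.
    v3 = to_s32((to_s32(width + 31) >> 3) & 0xFFFFFFFC)
    if to_s32(height * v3) <= 0 or c_div(LIMIT, v3) <= height:  # check 2
        return None
    return to_s32(height * v3)                            # size passed at 3


print(passes_check(32, 0x1CBFFFF))    # accepted, prints the size
print(passes_check(32, 0x1CC0000))    # rejected by check 2
print(passes_check(0x7FFFFFFF, 1))    # width + 31 wraps, rejected
```

Concrete testing like this only samples the state space, which is why the rest of the post models the code for a solver instead.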

One of the things that becomes clear when using the SMT-LIB language (even version 2 which is considerably nicer than version 1) is that using it directly is still quite cumbersome. This is why in recent versions of Immunity Debugger we have added wrappers around the CVC3 solver that allow one to build a model of code using Python expressions (credit for this goes to Pablo who did an awesome job). This was one of the things we covered during the recent Master Class at Infiltrate and people found it far easier than using the SMT-LIB language directly.

Essentially, we have Expression objects that represent variables or concrete values, and the operators on these expressions (+, -, %, >> etc) are overridden so that they make assertions on the solver’s state. For example, if x and y are Expression objects then x + y is also an Expression object representing the addition of x and y in the current solver context. Using the assertIt() function of any Expression object then asserts that condition to hold.
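To make the idea of overridden operators concrete, here is a toy sketch of how such a wrapper could be structured. It is not the prettysolver implementation: the class name Expr, its methods and the choice of 32-bit SMT-LIB bit-vector operators are all assumptions made for illustration, and it only builds a term as text rather than talking to a solver.

```python
class Expr(object):
    """Toy expression node (hypothetical, NOT the ID prettysolver API)."""

    def __init__(self, op, args=()):
        self.op = op              # variable name, literal, or operator name
        self.args = tuple(args)   # child expressions, empty for leaves

    @staticmethod
    def lift(value):
        """Turn a Python integer into a 32-bit literal node."""
        if isinstance(value, Expr):
            return value
        return Expr('#x%08x' % (value & 0xFFFFFFFF))

    def _binary(self, op, other):
        return Expr(op, (self, Expr.lift(other)))

    # Each overridden operator returns a new node instead of a number.
    def __add__(self, other):
        return self._binary('bvadd', other)

    def __mul__(self, other):
        return self._binary('bvmul', other)

    def __and__(self, other):
        return self._binary('bvand', other)

    def __rshift__(self, other):
        return self._binary('bvashr', other)

    def __gt__(self, other):
        return self._binary('bvsgt', other)

    def to_smt(self):
        """Render the tree as an SMT-LIB style term."""
        if not self.args:
            return self.op
        return '(%s %s)' % (self.op, ' '.join(a.to_smt() for a in self.args))


width = Expr('img_width')
v3 = ((width + 31) >> 3) & 0xfffffffc
print(v3.to_smt())
```

The Python expression reads almost exactly like the C it models, while the object it produces is a tree that can be handed to a solver or exported.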

With this in mind, we can model the decompiled code in Python as follows:

    import sys
    import time

    sys.path.append('C:\\Program Files\\Immunity Inc\\Immunity Debugger\\Libs\\x86smt')

    from prettysolver import Expression
    from smtlib2exporter import SmtLib2Exporter

    def check_sat():
        img_width = Expression("img_width", signed=True)
        img_height = Expression("img_height", signed=True)
        tmp_var = Expression("tmp_var", signed=True)
        const = Expression("const_val")
        (const == 0x7300000).assertIt()

        # Check 1: both dimensions must be positive
        (img_width > 0).assertIt()
        (img_height > 0).assertIt()

        # Check 2 must be false for the malloc call to be reached
        tmp_var = ((img_width + 31) >> 3) & 0xfffffffc
        (img_height * tmp_var > 0).assertIt()
        (const / tmp_var > img_height).assertIt()

        # Overflow condition: the upper 32 bits of the product are non-zero
        expr = (((tmp_var * img_height) &
                 0xffffffff00000000) != 0) # 1
        expr.assertIt()

        s = SmtLib2Exporter()
        s.dump_to_file(expr, 'test.smt2') # 2

        # After this we can check with z3 /smt2 /m test.smt2
        # Alternatively we can use expr.isSAT which calls CVC3 but it
        # is a much slower solver
        start_time = time.time()
        if expr.isSAT():
            print 'SAT'
            print expr.getConcreteModel()
        else:
            print 'UNSAT'

        print 'Total run time: %d seconds' % (time.time() - start_time)

    if __name__ == '__main__':
        check_sat()

The above code (which can be run from the command-line completely independently of Immunity Debugger) models the parts of the decompiled version that we care about. The added condition, marked as 1, checks for integer overflow by performing a 64-bit multiplication and then checking if the upper 32 bits are 0 or not. The first thing to note about this code is that it models the decompiled version quite naturally and is far easier to write and understand than the SMT-LIB alternative. This makes this kind of approach to analysing code much more tractable and means that once you are familiar with the API you can model quite large functions in very little time. For example, asserting that the condition if ( height * v3 <= 0 || 120586240 / v3 <= height ) must be false translates to the following, which is syntactically quite close to the C code:

    tmp_var = ((img_width + 31) >> 3) & 0xfffffffc
    (img_height * tmp_var > 0).assertIt()
    (const / tmp_var > img_height).assertIt()

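Checking if the function does in fact prevent integer overflow is then simple: if the solver reports that the asserted constraints are unsatisfiable, then no width and height that pass the checks can make the multiplication overflow.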
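The overflow condition itself can be illustrated on concrete values. The sketch below is plain Python rather than the ID API, and the function name mul_overflows_32 is hypothetical; it treats both operands as unsigned 32-bit values, multiplies them at full width and tests the upper 32 bits of the result.

```python
MASK32 = 0xFFFFFFFF
UPPER32 = 0xFFFFFFFF00000000


def mul_overflows_32(a, b):
    """True if a * b does not fit in 32 bits (operands taken as unsigned)."""
    wide = (a & MASK32) * (b & MASK32)   # product of two 32-bit values fits in 64 bits
    return (wide & UPPER32) != 0         # any bit set above bit 31 means overflow


print(mul_overflows_32(0x1CBFFFF, 4))      # a size below 0x7300000
print(mul_overflows_32(0x10000, 0x10000))  # exactly 2**32, does not fit
```

Any height and v3 whose product stays below 0x7300000 leaves the upper 32 bits clear, so the condition can never hold for such values.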

So, modulo modelling errors on our part, the check is safe and prevents an overflow on the size argument to malloc*. So what now? Well, in the case of this particular code-base an interesting behaviour appeared later in the code if the product of width and height was sufficiently large and the above function succeeded in allocating memory. That is, the height and width were small enough that height * v3 was less than 0x7300000, but multiplication of these values with other non-constants later in the code could still overflow. The question we then want to answer is: what is the maximum value of width * height that can be achieved while also passing the above check?

Solving Optimisation Problems with Universal Quantification**

This problem is essentially one of optimisation. There are many assignments to the input variables that will pass the overflow check but we are interested in those that maximise the product of width and height. Naturally this problem can be solved on paper with relative ease for small code fragments, but with longer, more complex code a solver-based approach quickly becomes more attractive.

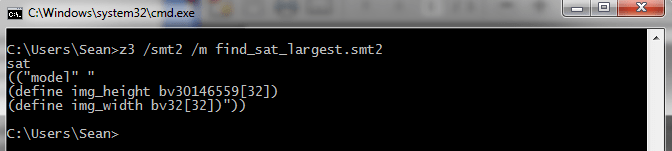

The first thing to note is that at the line marked as 2 in the above Python code we used a useful new feature of ID, the SmtLib2Exporter***, to dump the model constructed in CVC3 out to a file in SMT-LIB v2 syntax. This is useful for two reasons: firstly, we can use a solver other than CVC3, e.g. Z3, which is much faster for most problems, and secondly, we can manually modify the formula to include things that our Python wrapper currently doesn’t have, such as universal quantification.

Universal quantification, normally denoted by the symbol ∀ and the dual of existential quantification, is used to apply a predicate to all members of a set, e.g. ∀x ∈ N.P(x) states that some predicate P holds for all elements x of the natural numbers. Assume that the conditions of the integer overflow check are embodied in a function called sat_inputs and that M is the set of natural numbers modulo 2^32. The formula that we want to check is then sat_inputs(x, y) ∧ (∀ a, b ∈ M. sat_inputs(a, b) => x * y >= a * b). That is, we consider x and y to be a solution if they satisfy the conditions of sat_inputs and their product x * y is greater than or equal to the product of any other two values a and b that also satisfy sat_inputs. The quantified part of this property is encoded in the function is_largest in the following SMT-LIB v2 code. The rest of the code is dumped by the previous Python script, so checking this extra condition was less than 5 lines of work for us. The details of sat_inputs have been excluded for brevity; it simply encodes the semantics of the integer overflow checking code.

    (declare-funs ((img_width BitVec[32])(img_height BitVec[32])))

    (define-fun sat_inputs ((img_width BitVec[32])(img_height BitVec[32])) Bool
        (and
            ; Model of the code goes here
        )
    )

    (define-fun is_largest ((i BitVec[32])(j BitVec[32])) Bool
        (forall ((a BitVec[32]) (b BitVec[32]))
            (implies (sat_inputs a b)
                (bvsge (bvmul i j) (bvmul a b))
            )
        )
    )

    (assert (and
        (sat_inputs img_width img_height)
        (is_largest img_width img_height)
        )
    )

    (check-sat)
    (get-info model)

Running this through Z3 takes 270 seconds (using universal quantification results in a significant increase in the problem size) and we are provided with an assignment to the height and width variables that not only passes the checks in the code but is guaranteed to provide a maximal product. The end result is that with these two inputs height * width is 0x397fffe0, which is guaranteed to be the maximal product, and height * (((width + 31) >> 3) & 0xfffffffc) is 0x72ffffc which, as you would expect, is less than 0x7300000 and therefore satisfies the conditions imposed by the code. Maximising or minimising other variables or products is similarly trivial, although for such a small code snippet not particularly interesting (even maximising the product of height and width can be done in your head pretty easily without a solver, but instructive examples aren’t meant to be rocket science).
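For a snippet this small the result can be cross-checked without a solver. The sketch below is an independent sanity check in plain Python, not a reproduction of the Z3 run: the helper names are hypothetical, it uses ordinary integers on the assumption that nothing wraps for inputs that pass the checks, and it only scans v3 values up to 4000. For each v3 it takes the largest width and height that the checks allow, then uses all() in the role of the universal quantifier.

```python
LIMIT = 0x7300000


def v3_of(width):
    return ((width + 31) >> 3) & 0xFFFFFFFC


def sat_inputs(width, height):
    """Plain-integer version of the checks (assumes no 32-bit wrap-around)."""
    if width <= 0 or height <= 0:
        return False
    v3 = v3_of(width)
    return v3 > 0 and height * v3 > 0 and LIMIT // v3 > height


def best_for_v3(v3):
    """Largest width and height allowed for a given v3 (a multiple of 4)."""
    width = 8 * v3             # largest width that still maps to this v3
    height = LIMIT // v3 - 1   # largest height with LIMIT / v3 > height
    return width, height


# Partial scan only. The product for a given v3 is at most
# 8 * LIMIT - 8 * v3, so larger v3 values cannot beat v3 == 4.
candidates = [best_for_v3(v3) for v3 in range(4, 4001, 4)]
candidates = [c for c in candidates if sat_inputs(*c)]


def is_largest(i, j):
    """all() plays the role of the forall over satisfying inputs."""
    return all(i * j >= a * b for (a, b) in candidates)


best = max(candidates, key=lambda c: c[0] * c[1])
print(best, hex(best[0] * best[1]), hex(best[1] * v3_of(best[0])))
print(is_largest(*best))
```

Under these assumptions the scan settles on a width of 32 and a height of 0x1cbffff, whose product and malloc size match the values reported above.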

This capability becomes far more interesting on larger or more complex functions and code paths. In such cases the ability to use a solver as a vehicle for precisely exploring the state space of a program can mean the difference between spotting a subtle bug and missing out.

Conclusion

By its nature code auditing is about tracking state spaces. The task is to discover those states implied by the code but not considered by the developer. In the same way that one may look at a painting and discover meaning not intended by the artist, an exploit developer will look at a program and discover a shadow-program, not designed or purposefully created, but in existence nonetheless. In places this shadow-program is thick, it is easily discovered, has many entry points and can be easily leveraged to provide exploit primitives. In other places, this shadow-program clings to the intended program and is barely noticeable. An accidental decrement here, an off-by-one bound there. Easy to miss and perhaps adding few states to the true program. It is from these cases that some of the most entertaining exploits derive. From state spaces that are barren and lacking in easily leverageable primitives. Discovering such gateway states, those that move us from the intended program to its more enjoyable twin, is an exercise in precision. This is why it continues to surprise me that we have so little tool support for truly extending our capacity to deal with massive state spaces in a precise fashion.

Of course we have some very useful features for making a program easier to manually analyse, among them the HexRays decompiler and IDA’s various features for annotating and shaping a disassembly, as well as plugin architectures for writing your own tools with Immunity Debugger, IDA and others. What we lack is real, machine-driven assistance in determining the state space of a program and, dually, in providing reverse engineers with function and basic block level information on how a given chunk of code affects this state space.

While efforts still need to be made to develop and integrate automation technologies into our workflows I hope this post, and the others, have provided some motivation to build tools that not only let us analyse code but that help us deal with the large state spaces underneath.

* As a side note, Z3 solves these constraints in about half a second. We hope to make it our solving backend pretty soon for obvious reasons.

** True optimisation problems in the domain of satisfiability are different and usually fall under the heading of MaxSAT and OptSAT. The former deals with maximising the number of satisfied clauses while the latter assigns weights to clauses and looks for solutions that minimise or maximise the sum of these weights. We are instead dealing with optimisation within the variables of the problem domain. OptSAT might provide interesting solutions for automatic gadget chaining though and is a fun research area.

*** This will be in the next release which should be out soon. If you want it now just drop me a mail.

Thanks to Rolf Rolles for initially pointing out the usefulness of Z3’s support for universal/existential quantification for similar problems.

Nice! Glad to see you got this working. I’m still stumped on getting universal quantification working properly with the API (rather than the SMTLIB interface) :(. Finicky beasts, SMT solvers are.

Looking forward to more posts along these lines.

Nice post. I really like the concept of “gateway states”.

I don’t claim to have any practical experience with solvers, but when I was messing around with them, modeling code manually, the big problem I ran into was loops. The only solver I found that supported the concept was yices, where you can define recursive functions. Is there something I’m missing – or are you avoiding them, or just unrolling them manually?